Large language models (LLMs) are promising tools for scaffolding students' English
writing skills, but their effectiveness in real-time K-12 classrooms remains
underexplored. Addressing this gap, our study examines the benefits and limitations
of using LLMs as real-time learning support, considering how classroom constraints,
such as diverse proficiency levels and limited time, affect their effectiveness.

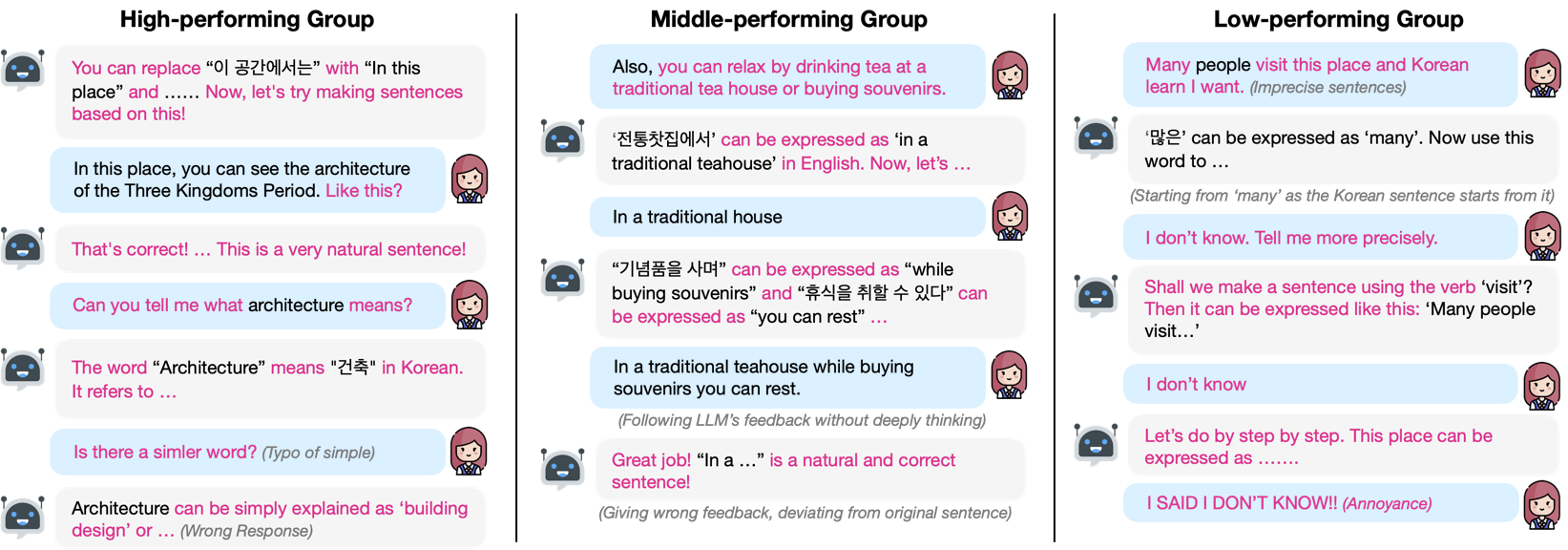

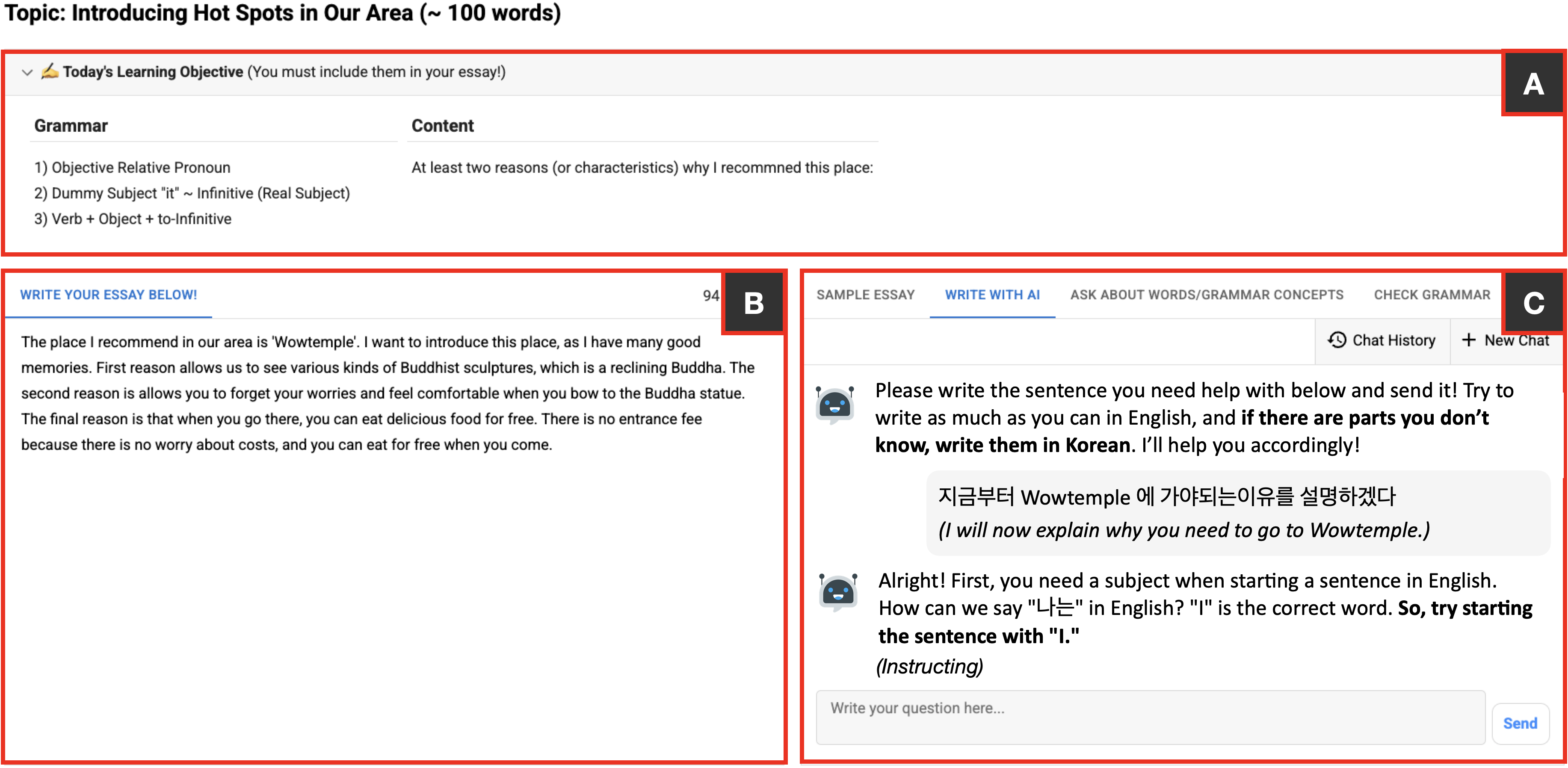

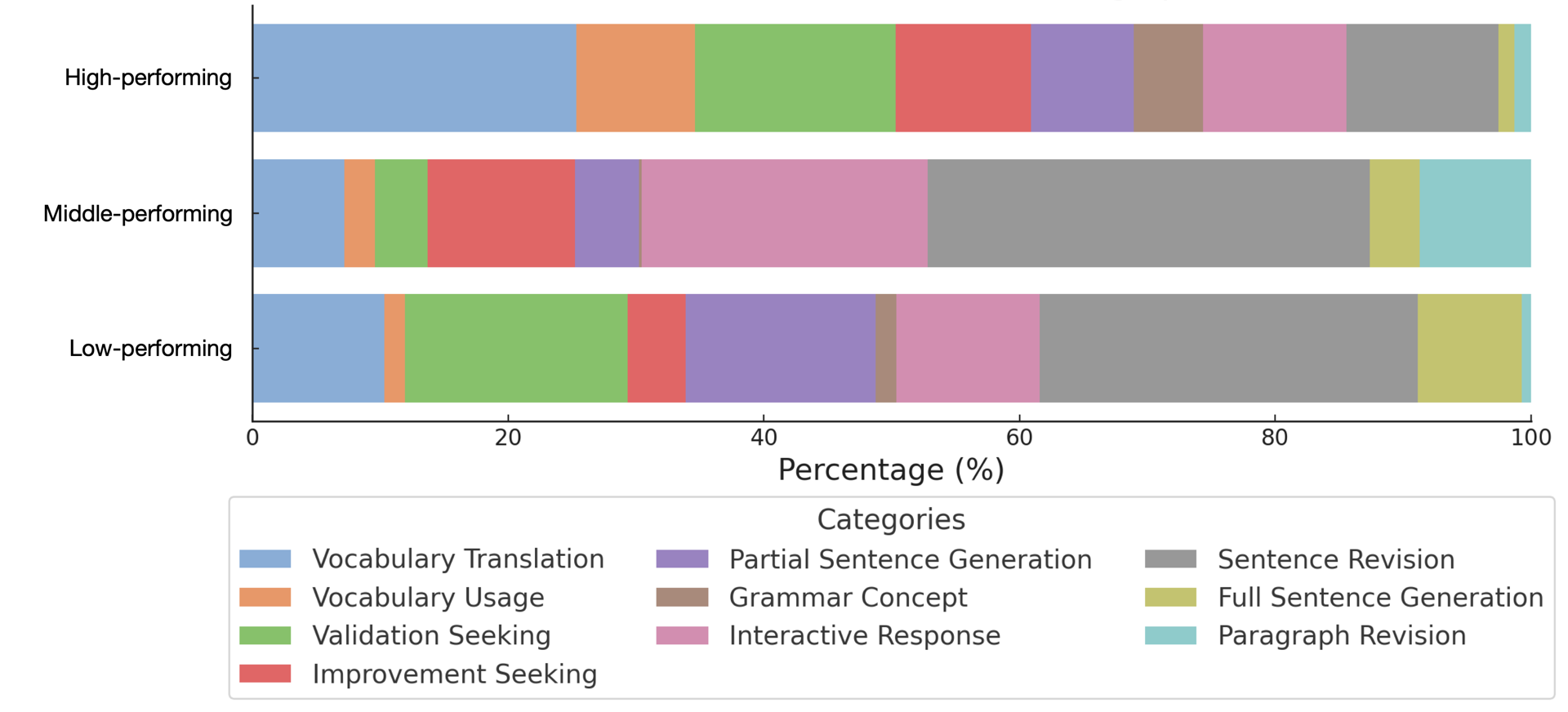

We conducted a deployment study with 157 eighth-grade students in a South Korean
middle school English class over six weeks. Our findings reveal that while scaffolding
improved students' ability to compose grammatically correct sentences, this
step-by-step approach demotivated lower-proficiency students and increased their
reliance on the system. We also observed challenges to classroom dynamics: extroverted
students often dominated the teacher's attention, and the system's assistance made it
difficult for teachers to identify struggling students.

Based on these findings, we discuss design guidelines for integrating LLMs into
real-time writing classes as inclusive educational tools.